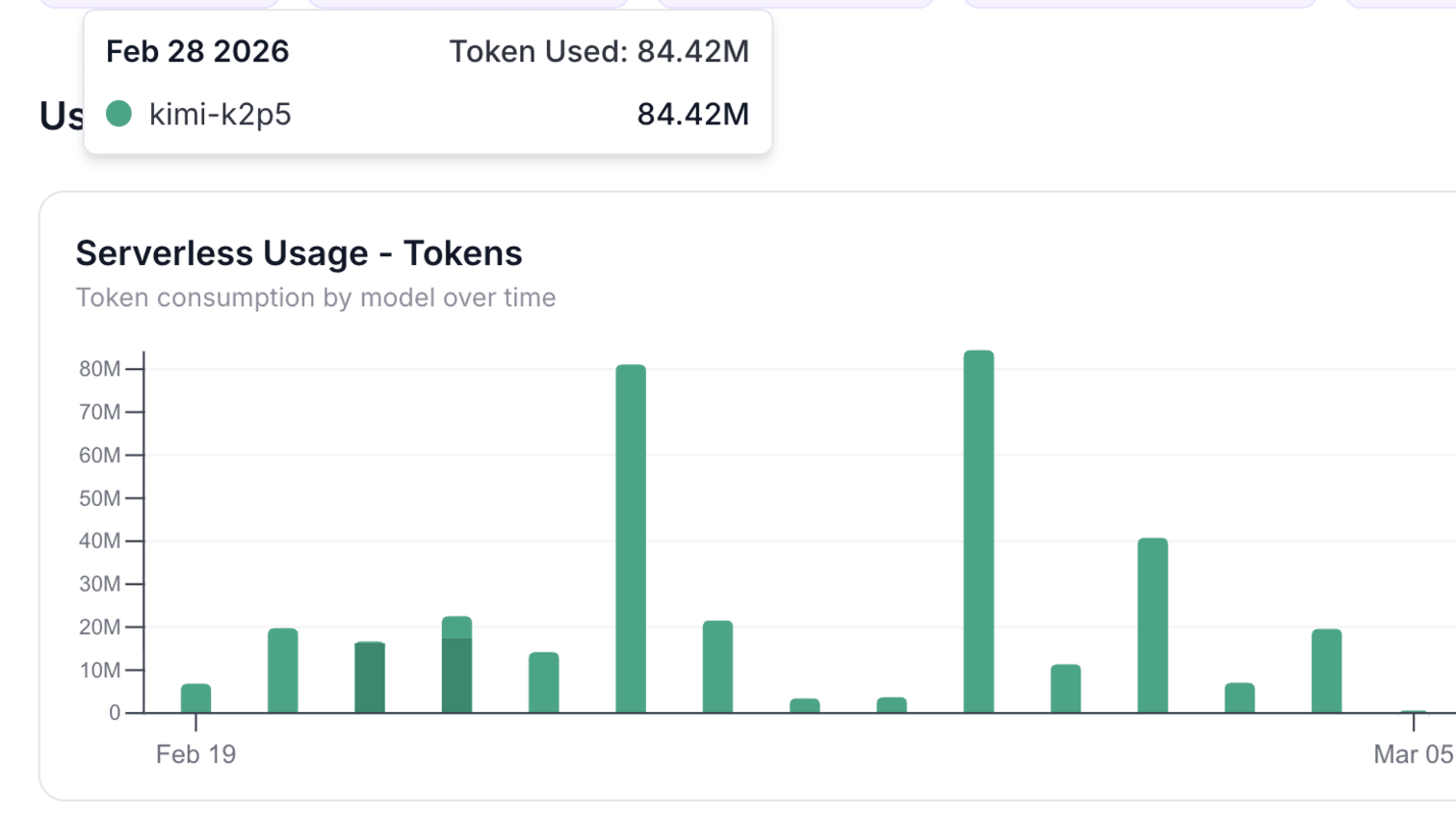

I burned 84 million tokens on February 28th. Researching companies, drafting memos, running agents.

That’s running Kimi K2.5, a serverless model accessed via API. At Claude[1] or OpenAI[2] rates, roughly $9 per million tokens blended, equivalent usage would cost $756 for a single day’s work. My peak days top 80 million tokens. My average days run 20 million. Cloud inference at frontier-model pricing adds up fast.
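The daily figure is simple multiplication, sketched below. The $9 blended rate and the token counts come from the text; the function and constant names are illustrative only.

```python
# Blended price per million tokens, as quoted in the text (an approximation).
BLENDED_USD_PER_MILLION = 9.0


def daily_cost_usd(tokens_millions: float, rate: float = BLENDED_USD_PER_MILLION) -> float:
    """Cost in USD of one day's usage, given tokens in millions."""
    return tokens_millions * rate


peak_day = daily_cost_usd(84)     # the February 28th volume
average_day = daily_cost_usd(20)  # a typical day

print(f"Peak day: ${peak_day:,.0f}")
print(f"Average day: ${average_day:,.0f}")
```

At the same rate, an average 20-million-token day works out to $180.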

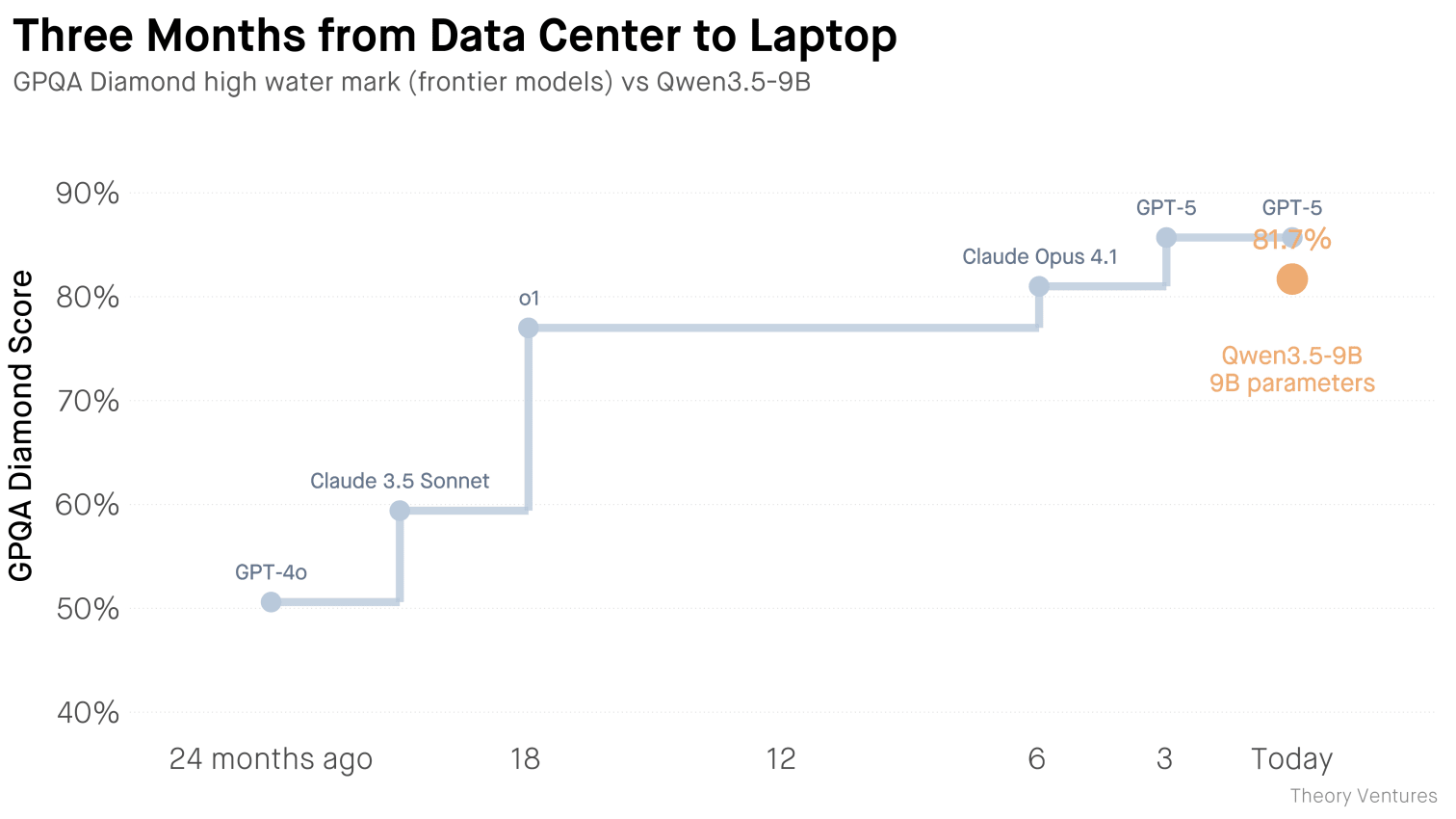

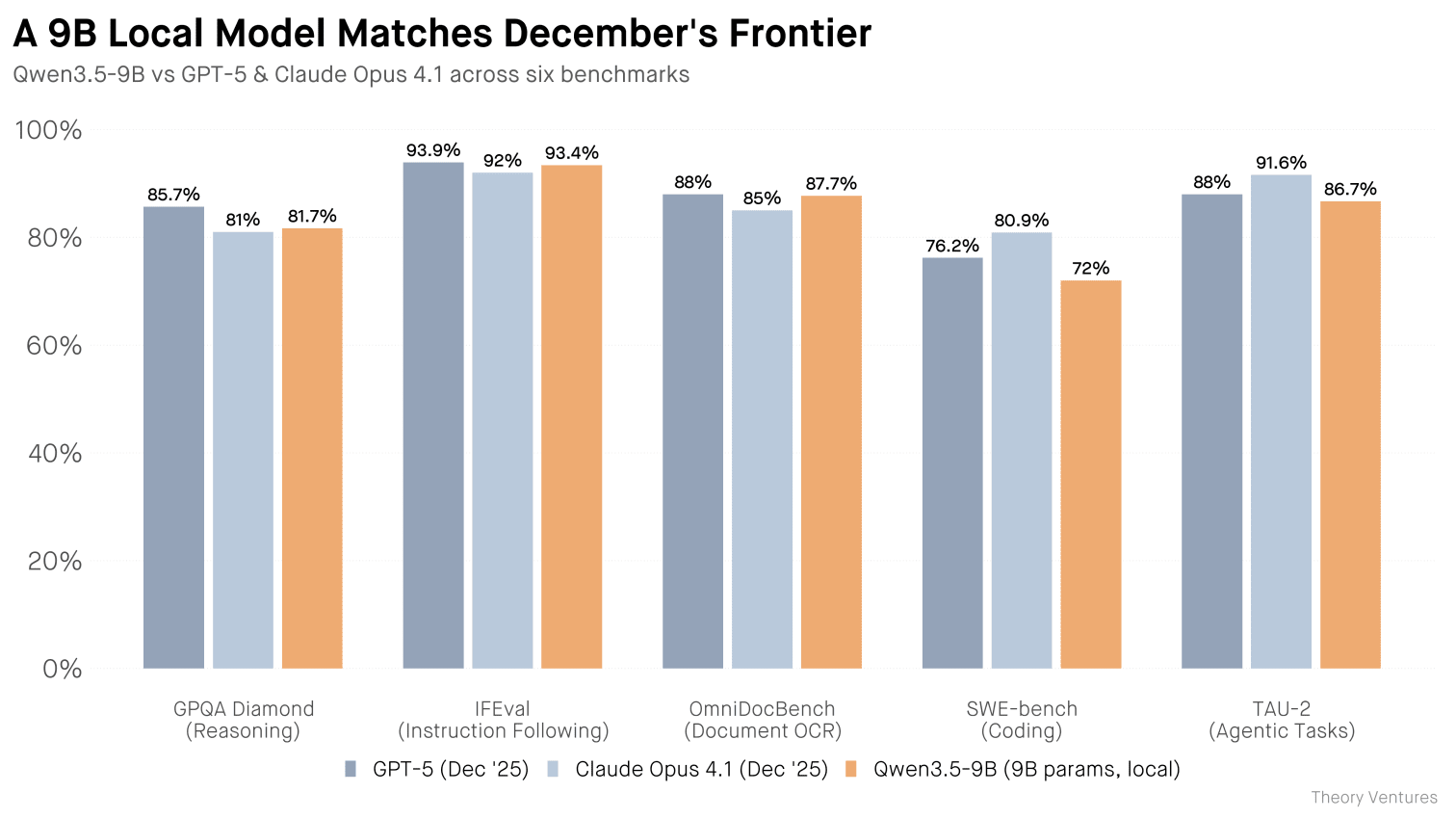

This week, Alibaba released Qwen3.5-9B[3], an open-source model that matches Claude Opus 4.1 from December 2025. It runs locally on 12GB of RAM. Three months ago, this capability required a data center. Now it requires a power outlet.

A $5,000 laptop (a MacBook Pro with enough memory to run Qwen locally) pays for itself after about 556 million tokens at that $9 blended rate. At my average of 20 million tokens per day, that’s about four weeks. At peak volume, it’s under a week.

After payback, the marginal cost drops to electricity.
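The break-even arithmetic can be sketched as follows. The laptop price, blended rate, and daily volumes come from the text; the function names are illustrative. Electricity is left out because the post doesn't put a number on it.

```python
LAPTOP_USD = 5_000             # hardware price from the text
BLENDED_USD_PER_MILLION = 9.0  # cloud rate from the text


def break_even_tokens_millions(hardware_usd: float, rate: float) -> float:
    """Millions of tokens at which avoided cloud spend equals the hardware price."""
    return hardware_usd / rate


def payback_days(hardware_usd: float, rate: float, daily_tokens_millions: float) -> float:
    """Days of usage needed to reach break-even at a steady daily volume."""
    return break_even_tokens_millions(hardware_usd, rate) / daily_tokens_millions


tokens = break_even_tokens_millions(LAPTOP_USD, BLENDED_USD_PER_MILLION)
days_average = payback_days(LAPTOP_USD, BLENDED_USD_PER_MILLION, 20)
days_peak = payback_days(LAPTOP_USD, BLENDED_USD_PER_MILLION, 84)

print(f"Break-even: {tokens:.0f}M tokens")
print(f"At 20M/day: {days_average:.1f} days")
print(f"At 84M/day: {days_peak:.1f} days")
```

The result scales linearly with both inputs: a cheaper machine or a higher cloud rate shortens the payback period proportionally.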

It isn’t an intelligence compromise. Reasoning, coding, agentic workflows, document processing, instruction following: the 9B model matches December’s frontier across the board.

What changes when frontier intelligence runs locally? Everything I send to cloud APIs today — drafting emails, researching companies, writing code, analyzing documents — stays on my machine. No API logs. No third-party retention. No outages. No rate limits.

The tradeoff is parallelization. Cloud APIs handle thousands of concurrent requests. A laptop runs one inference at a time. For simple tasks (summarization, drafting, Q&A) that’s fine. Queue them up. Let them run overnight.

For complex agentic workflows that spawn dozens of parallel threads, local inference may not be worth the wait. The economics favor depth over breadth: fewer tasks, run longer, run cheaper.
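A minimal sketch of the queue-it-up pattern, under loud assumptions: `fake_infer` is a hypothetical stand-in for whatever call reaches a locally hosted model, and `run_queue` is an illustrative helper, not part of any library. The point is only the shape: one job at a time, in order, with the results collected for the morning.

```python
from collections import deque
from typing import Callable


def run_queue(prompts: list[str], infer: Callable[[str], str]) -> list[tuple[str, str]]:
    """Run prompts strictly one at a time, in order, and collect (prompt, output) pairs."""
    pending = deque(prompts)
    results = []
    while pending:
        prompt = pending.popleft()
        results.append((prompt, infer(prompt)))  # blocks until this job finishes
    return results


def fake_infer(prompt: str) -> str:
    # Hypothetical placeholder: swap in a real call to a local model here.
    return f"[draft for: {prompt}]"


results = run_queue(["Summarize memo A", "Draft email B"], fake_infer)
for prompt, output in results:
    print(prompt, "->", output)
```

Because each job blocks the next, total wall-clock time is the sum of the individual runs, which is why this suits overnight batches better than workflows that fan out into dozens of threads.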

Three months from data center to laptop. The buy-vs-rent math just changed.