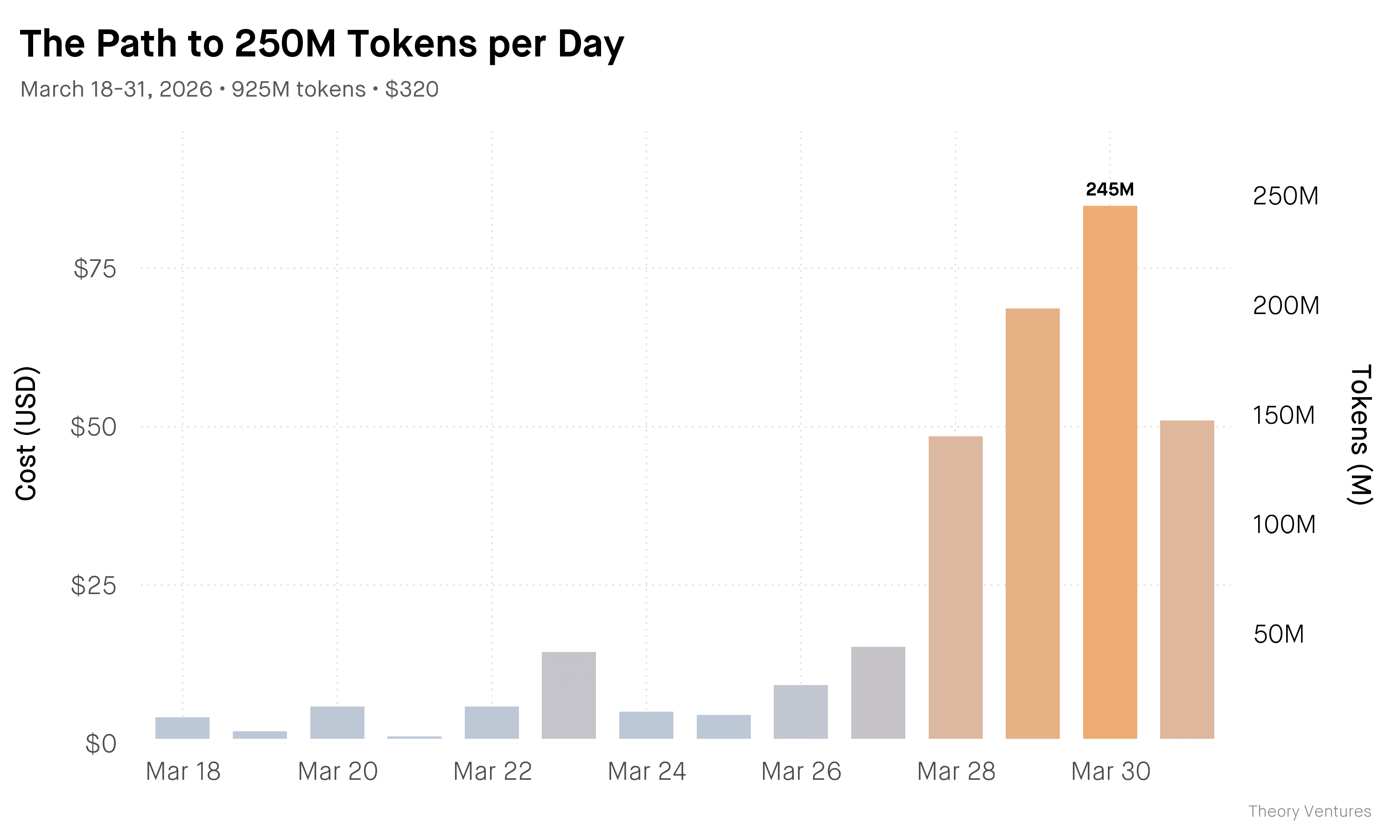

Two days ago, I burnt 250 million tokens in a single day.

That’s up 20x in six weeks. This practice, called tokenmaxxing, is the deliberate maximization of token consumption. The question : how much electricity can we turn into useful work?
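To put the 20x in perspective, here is a quick back-of-the-envelope sketch. It uses only the two figures above and assumes, purely for illustration, that usage compounded smoothly week over week (the real ramp was probably lumpier). The variable names are mine.

```python
# Figures from the post: 250 million tokens in one day, up 20x in six weeks.
tokens_today = 250_000_000
growth_multiple = 20
weeks = 6

# Implied daily usage six weeks earlier.
baseline_tokens = tokens_today / growth_multiple

# Assumption: smooth compounding, so the weekly factor is the sixth root of 20.
weekly_factor = growth_multiple ** (1 / weeks)

print(f"Baseline: {baseline_tokens:,.0f} tokens per day")
print(f"Weekly growth factor: {weekly_factor:.2f}x")
```

Under that assumption, the starting point was about 12.5 million tokens a day, growing roughly 65% every week.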

The secret is parallelization. Structure a plan at the start of the day that allows multiple agents to work simultaneously. METR research shows the latest models can now work autonomously for 12 hours, up from 1 hour a year ago. Here’s the ramp once I started implementing a daily plan :

So, what did I do two days ago? Here’s one example. I prepared the presentation I’m delivering tonight at the AI Engineers Tech Talk, on the infrastructure for building with agents.

One agent pulled git commit history from the code repository & generated a lines-of-code chart. Another queried the agent error logs & built a time series of agent failures by root cause. A third fact-checked the METR research citations. A fourth built the presentation using a JavaScript library. A fifth critiqued the overall flow & content. All of this happened in the background.
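A flow like this can be sketched with Python's `asyncio`. This is a minimal illustration, not my actual setup : `run_agent` is a hypothetical stand-in that only sleeps instead of calling a model, the task names are made up, and the ordering (three independent research agents first, then the slide builder, then the critic) is an assumption about how the work depends on itself.

```python
import asyncio
from typing import Dict, Optional


async def run_agent(name: str, inputs: Optional[Dict[str, str]] = None) -> str:
    """Hypothetical stand-in for a real agent call; it only sleeps briefly."""
    await asyncio.sleep(0.01)
    used = ", ".join(sorted(inputs)) if inputs else "nothing"
    return f"{name} done (used: {used})"


async def prepare_talk() -> Dict[str, str]:
    # Stage 1: the three research tasks are independent, so run them concurrently.
    names = ["loc_chart", "failure_timeseries", "citation_check"]
    outputs = await asyncio.gather(*(run_agent(n) for n in names))
    results = dict(zip(names, outputs))

    # Stage 2 (assumed dependency): the slide builder consumes the stage 1 outputs.
    results["slides"] = await run_agent("slides", dict(results))

    # Stage 3 (assumed dependency): the critic reviews the finished slides.
    results["critique"] = await run_agent("critique", {"slides": results["slides"]})
    return results


if __name__ == "__main__":
    for name, output in asyncio.run(prepare_talk()).items():
        print(f"{name} -> {output}")
```

The point of the plan is to find the stage 1 tasks : anything with no dependencies can start immediately, & the longer each agent can run unattended, the more of those you can have in flight.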

This was just one of the parallel flows in a day. The productivity ceiling? Still unmaxxed.